For millennia, humanity’s quest to process information faster and solve harder problems has driven the evolution of computing devices. What started with beads on a frame has now reached the mind-bending realm of quantum mechanics.

The Humble Beginnings — The Abacus

Dating back more than 2,500 years, the abacus is one of the earliest known mechanical aids for calculation. Simple yet effective, it let merchants and scholars perform arithmetic long before written numerals became widespread.

🧮

Long before electricity or silicon chips, there was the abacus — a simple, elegant device that marked the beginning of mankind’s relationship with computation.

Ancient civilizations including the Chinese, Greeks, and Romans used forms of the abacus to perform arithmetic. In its familiar form, a wooden frame holds movable beads on rods, and each rod stands for one place value. With practice, users could carry out addition, subtraction, multiplication, and division with impressive speed and accuracy.
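To make the bead-and-rod idea concrete, here is a minimal Python sketch of addition on an abacus. It assumes a simplified model that is not tied to any particular historical design: each rod holds one decimal digit, and a rod that overflows carries one to the next rod. The function names `to_rods`, `add_on_rods`, and `read_rods` are hypothetical, chosen only for this illustration.

```python
def to_rods(number, rod_count):
    """Split a non-negative integer into decimal digits, lowest place value first."""
    rods = []
    for _ in range(rod_count):
        rods.append(number % 10)
        number //= 10
    if number:
        raise ValueError("number does not fit on this many rods")
    return rods


def add_on_rods(rods, addend):
    """Add `addend` rod by rod, carrying one to the next rod on overflow."""
    result = list(rods)
    addend_digits = to_rods(addend, len(rods))
    carry = 0
    for i, digit in enumerate(addend_digits):
        total = result[i] + digit + carry
        result[i] = total % 10   # beads left showing on this rod
        carry = total // 10      # one bead's worth passed to the next rod
    if carry:
        raise ValueError("result does not fit on this many rods")
    return result


def read_rods(rods):
    """Read the bead positions back as an ordinary integer."""
    return sum(digit * 10 ** i for i, digit in enumerate(rods))


frame = to_rods(478, rod_count=5)
frame = add_on_rods(frame, 256)
print(read_rods(frame))  # 734
```

The carry step is the part a human operator performs by hand: when a rod runs out of beads, it is cleared and one bead is moved on the next rod over.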

More than just a counting tool, the abacus represents the human desire to enhance memory, reduce error, and think beyond mental limits. Even today, it’s still used in some parts of the world, proving that simple ideas can be incredibly enduring.

The Dawn of Modern Computing

In the 1830s, Charles Babbage designed the Analytical Engine, a mechanical general-purpose computer that laid the groundwork for programmable machines. Although it was never completed, his vision inspired generations.

The Digital Revolution

The 20th century brought monumental shifts:

- ENIAC (1945): Often cited as the first programmable, general-purpose electronic digital computer.

- Transistors (1947): Replaced bulky vacuum tubes, enabling smaller, faster, and more reliable devices.

- Microprocessors (1971): Put an entire central processing unit on a single chip, paving the way for personal computers.

Enter the Quantum Era

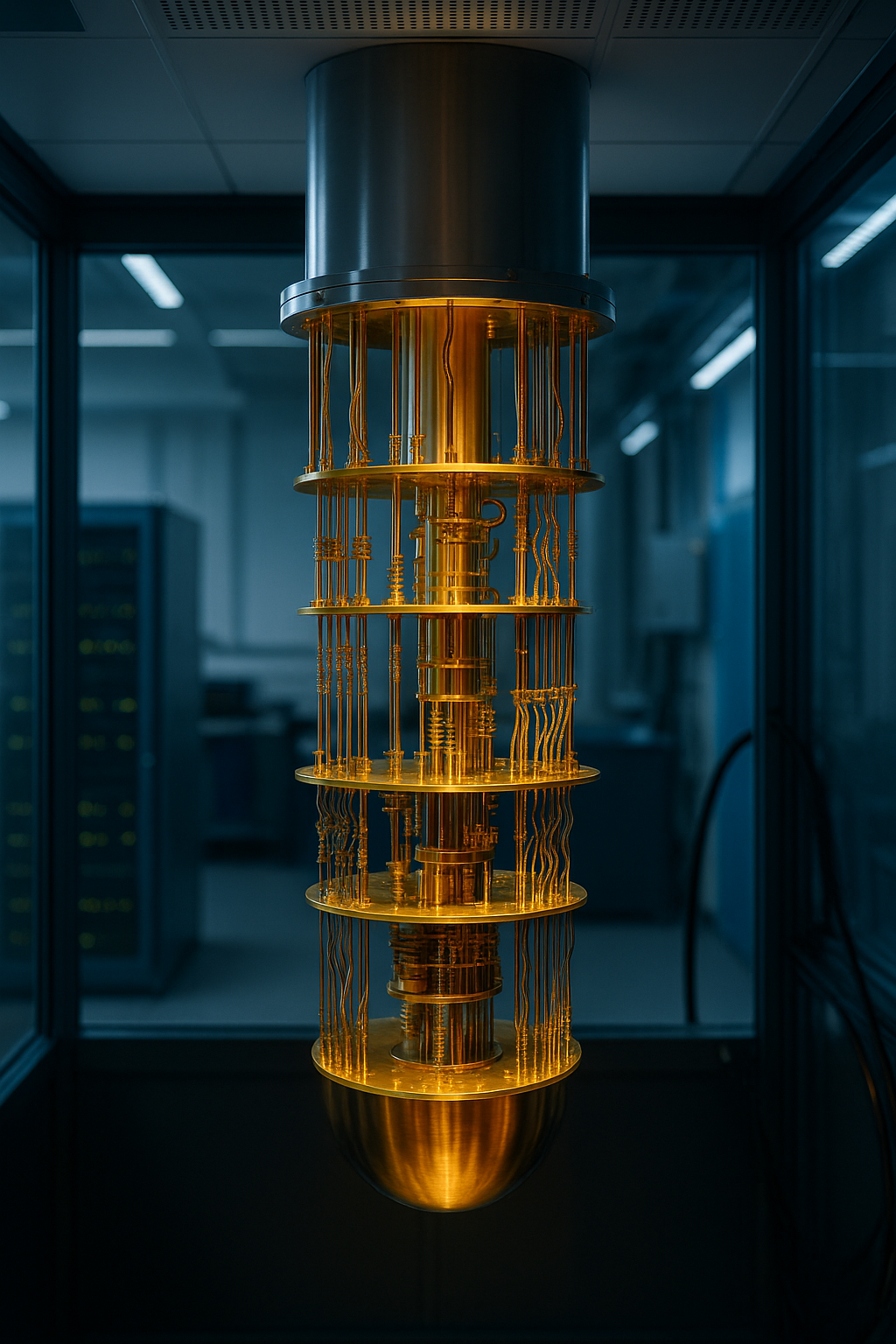

Today, we stand on the threshold of a new frontier — Quantum Computing. Unlike classical computers that use bits (0 or 1), quantum computers use qubits, harnessing superposition and entanglement. They promise breakthroughs in fields like cryptography, drug discovery, and artificial intelligence.

While the abacus reflects humanity’s first step toward computational thinking, quantum computers are redefining what’s possible in the digital age.

At their core, quantum computers apply two principles of quantum mechanics, superposition and entanglement, to process information in ways that classical computers cannot. A classical bit is always either 0 or 1, whereas a qubit can be in a superposition of both until it is measured. Entangled qubits share a joint state, so the result of measuring one is correlated with the result of measuring the other.
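Superposition and entanglement can be illustrated with a tiny classical simulation. The Python sketch below is only an illustration under stated assumptions: it tracks the four amplitudes of a two-qubit state as a plain list, uses real-valued amplitudes only, and the function names are hypothetical rather than taken from any quantum library. It applies a Hadamard gate to put the first qubit into an equal superposition, then a CNOT gate to entangle the pair.

```python
import math

# Normalization factor for the Hadamard gate.
H = 1 / math.sqrt(2)


def hadamard_on_first(state):
    """Apply a Hadamard gate to the first qubit of a two-qubit state.

    `state` lists the amplitudes of |00>, |01>, |10>, |11> in that order.
    """
    a00, a01, a10, a11 = state
    return [
        H * (a00 + a10),
        H * (a01 + a11),
        H * (a00 - a10),
        H * (a01 - a11),
    ]


def cnot(state):
    """Flip the second qubit when the first is 1 (swaps |10> and |11>)."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]


def probabilities(state):
    """Born rule: each outcome's probability is its squared amplitude."""
    return [abs(a) ** 2 for a in state]


start = [1.0, 0.0, 0.0, 0.0]            # both qubits start in |0>
superposed = hadamard_on_first(start)   # first qubit in equal superposition
bell = cnot(superposed)                 # the two qubits are now entangled

for label, p in zip(["00", "01", "10", "11"], probabilities(bell)):
    print(f"P({label}) = {p:.2f}")
```

The printed probabilities are 0.50 for `00` and `11` and 0.00 for `01` and `10`: each qubit on its own looks like a fair coin, yet the two always agree. Note that a simulation like this needs 2^n amplitudes for n qubits, which is one reason classical machines struggle to imitate larger quantum systems.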

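For certain problems, this lets quantum algorithms far outpace the best known classical methods. Shor’s algorithm, for example, could factor the large numbers behind widely used public-key cryptography dramatically faster than any known classical algorithm, and simulating molecular structures is another promising target. The advantage is not universal, though: for unstructured search, Grover’s algorithm offers a quadratic rather than exponential speedup, and for many everyday tasks no quantum advantage is known.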

Quantum computing is still in the early stages of development, but companies like IBM and Google, along with startups around the world, are racing to bring it into mainstream use. It’s not just a leap in speed; it’s a fundamental shift in how we understand and perform computation.